Our Talk at the SIGS DATACOM INFODAYS

Large Language Models (LLMs), such as those behind ChatGPT and similar AI systems, open up a multitude of possibilities for companies. Whether as a customer chatbot, code assistant, or knowledge base, the potential applications seem limitless. However, these opportunities come with significant risks. At the INFODAYS conference “Generative AI for Developers”, we spoke alongside leading experts about the risks and uncertainties of using LLMs in applications, and we demonstrated ways to address these challenges systematically.

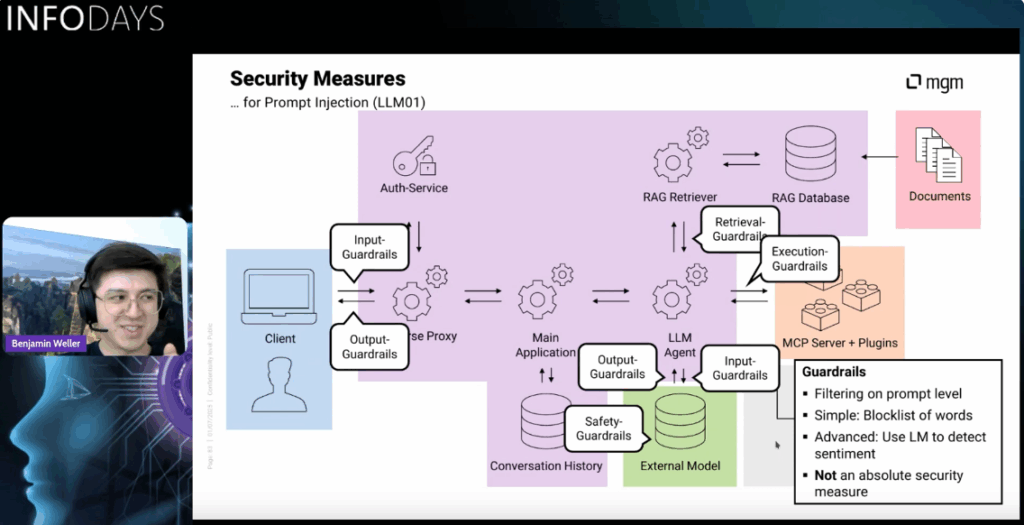

The presentation focused on an LLM application of typical complexity: sensitive company and user data are integrated via Retrieval-Augmented Generation (RAG), and externally hosted plugins are connected via the Model Context Protocol (MCP) and orchestrated by the LLM. Using threat modeling, we derived the application's assets (resources requiring protection) and identified the threats against them. We then mapped these threats to the established risk catalog of the OWASP Top 10 for LLM Applications. Finally, we derived mitigation strategies that protect the application against both AI-specific risks and conventional application security risks.
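
To make this workflow more concrete, here is a minimal Python sketch of how threats from such a threat model might be recorded per asset and grouped by OWASP category. It is not the model presented in the talk: the assets, threats, and mitigations are hypothetical examples, the category list is a small subset of the 2025 edition of the OWASP Top 10 for LLM Applications (which edition the talk used is an assumption), and the names `Threat`, `classify`, and `unmitigated` are illustrative only.

```python
from collections import defaultdict
from dataclasses import dataclass

# Subset of the OWASP Top 10 for LLM Applications, 2025 edition.
# Assumption: the talk may have used a different edition or more categories.
OWASP_LLM = {
    "LLM01": "Prompt Injection",
    "LLM02": "Sensitive Information Disclosure",
    "LLM06": "Excessive Agency",
}


@dataclass(frozen=True)
class Threat:
    """One threat against one asset (a resource requiring protection)."""
    asset: str
    description: str
    owasp_id: str                      # key into OWASP_LLM
    mitigations: tuple[str, ...] = ()  # planned countermeasures, may be empty


def classify(threats: list[Threat]) -> dict[str, list[Threat]]:
    """Group threats by OWASP category name; reject unknown category IDs."""
    by_category: dict[str, list[Threat]] = defaultdict(list)
    for threat in threats:
        if threat.owasp_id not in OWASP_LLM:
            raise ValueError(f"unknown OWASP category: {threat.owasp_id}")
        by_category[OWASP_LLM[threat.owasp_id]].append(threat)
    return dict(by_category)


def unmitigated(threats: list[Threat]) -> list[Threat]:
    """Return the threats for which no mitigation has been recorded yet."""
    return [t for t in threats if not t.mitigations]


# Hypothetical threats for a RAG + MCP application like the one described above.
THREATS = [
    Threat("RAG document store",
           "Poisoned document smuggles instructions into the prompt (indirect prompt injection)",
           "LLM01",
           ("Treat retrieved text as untrusted data",)),
    Threat("Company and user data in the RAG index",
           "Retrieval returns documents the requesting user is not allowed to read",
           "LLM02",
           ("Enforce the user's access rights at retrieval time",)),
    Threat("Actions exposed by MCP plugins",
           "LLM triggers a plugin action with broader permissions than the task needs",
           "LLM06"),
]

if __name__ == "__main__":
    for category, items in classify(THREATS).items():
        print(f"{category}: {len(items)} threat(s)")
    for threat in unmitigated(THREATS):
        print(f"Open: {threat.asset} -> {threat.description}")
```

Recording the category per threat rather than per asset reflects that a single asset, such as the RAG index, can be exposed to several risk categories. The `unmitigated` check then lists the threats that still lack a countermeasure, in this example the MCP plugin threat.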

Afterwards, an extensive Q&A session gave us the opportunity to exchange ideas with the participants and discuss the protection strategies we had presented. The participants were generally aware of the need for security in LLM applications, but many were still unsure about the details of the protection mechanisms. This led to numerous questions, all of which we were able to answer. The discussion underlined how important sound security knowledge and a deep understanding of the LLM components are for assessing risks and choosing protective measures.

We would like to thank SIGS DATACOM for the invitation to the INFODAYS and the participants for the lively exchange. If you are interested in training or a security analysis of your LLM application, please do not hesitate to contact us!