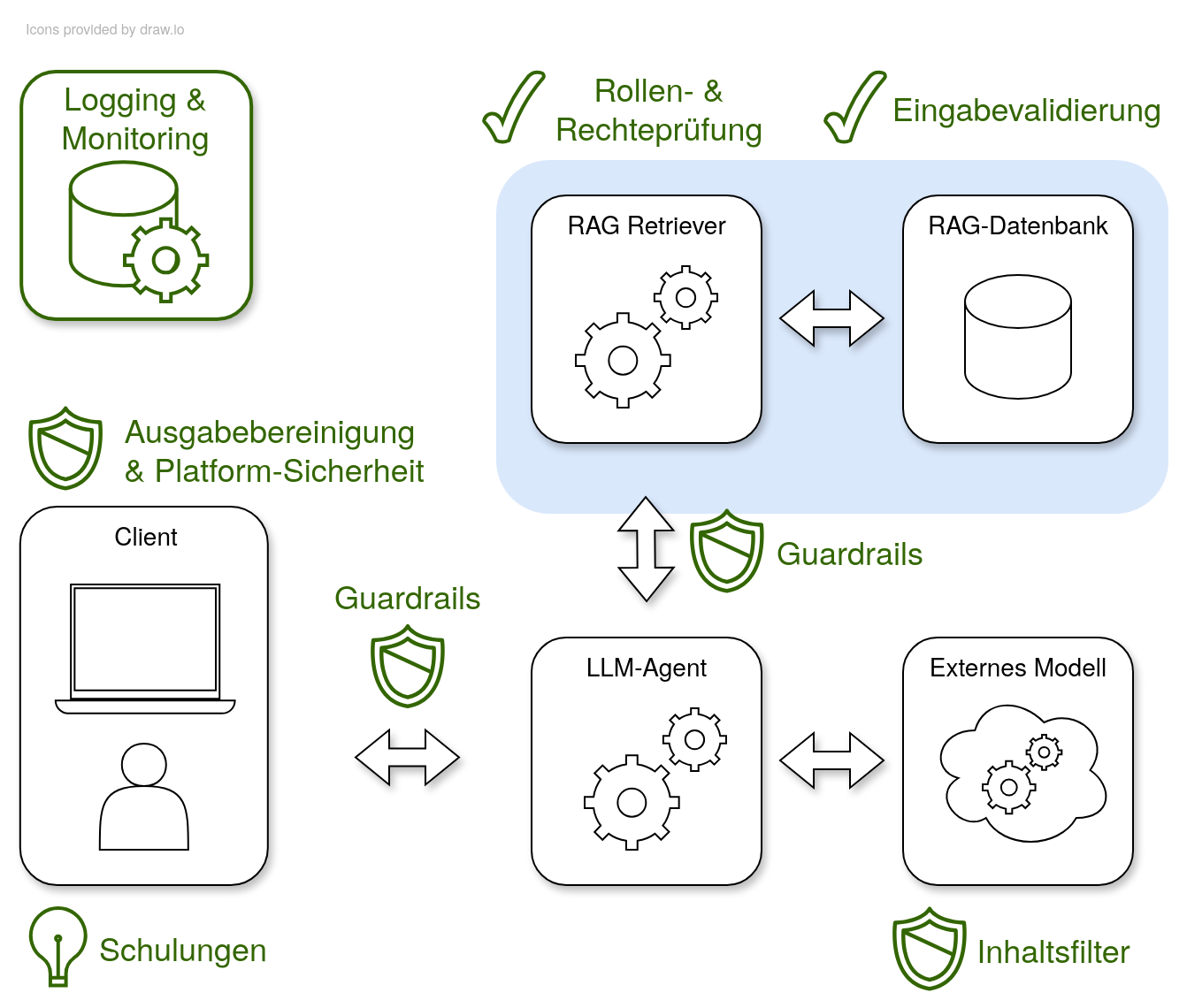

How can companies reliably protect AI-powered applications? In our follow-up article in "JavaSPEKTRUM", we continue our examination of how to integrate Large Language Models (LLMs) securely, this time focusing on concrete countermeasures. Using the OWASP catalog for LLMs and the established threat modeling process, we provide practical answers to the new technological challenges. The internal chatbot introduced in the previous article, the central scenario of the series, remains our common thread: an LLM agent communicates with an external model and accesses company knowledge through a retrieval-augmented generation (RAG) system.

Further risks in everyday business

Let's first consider further risks of integrating LLMs into applications. First, misinformation: LLMs sometimes produce plausible-sounding but incorrect output, usually because of incomplete or outdated training data. Users who rely on such output may face legal or security-relevant consequences. Second, the “poisoning” of training data threatens the integrity of the model. If public data sources are manipulated, hidden backdoors can find their way into the model, and attackers could exploit them to deliberately trigger incorrect answers. Third, the high complexity of the supply chain creates new vulnerabilities. External components such as cloud infrastructure or open-source models are difficult to vet consistently, and the opaque origin and subsequent modification of models make a comprehensive assessment difficult.
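One common way to narrow part of the supply chain risk (a technique we add here for illustration; the article does not prescribe it) is to verify a downloaded model artifact against a digest published by its provider before loading it. The following minimal sketch assumes the provider publishes a SHA-256 hex digest; the class and method names are hypothetical.

```java
import java.io.IOException;
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.HexFormat;

/** Hypothetical helper: checks a downloaded model artifact against a published SHA-256 digest. */
final class ModelIntegrityCheck {

    private ModelIntegrityCheck() {}

    /** Streams the file through SHA-256 and returns the digest as lowercase hex. */
    static String sha256Hex(Path file) throws IOException, NoSuchAlgorithmException {
        MessageDigest digest = MessageDigest.getInstance("SHA-256");
        try (InputStream in = Files.newInputStream(file)) {
            byte[] buffer = new byte[8192];
            int read;
            while ((read = in.read(buffer)) != -1) {
                digest.update(buffer, 0, read);
            }
        }
        return HexFormat.of().formatHex(digest.digest());
    }

    /** True only if the file's digest equals the expected hex digest (case-insensitive). */
    static boolean matchesPublishedDigest(Path file, String expectedHex)
            throws IOException, NoSuchAlgorithmException {
        return sha256Hex(file).equalsIgnoreCase(expectedHex.strip());
    }
}
```

An application would refuse to load the model when `matchesPublishedDigest` returns `false`. Note the limits: such a check only detects tampering after publication; it cannot reveal a backdoor that was already trained into the published model.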

Protective measures in practice

The strategy for securing LLM applications rests on concrete measures, illustrated here with examples. First, guardrails increase resistance to prompt injection. They screen requests for problematic patterns so that manipulative inputs can be blocked early; additional machine learning methods make these filters more flexible. However, guardrails alone do not offer complete protection against prompt injection; they can only serve as a supporting layer. Some current research even suggests that guardrails cannot, in principle, provide 100% protection.
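The pattern-based part of a guardrail can be sketched as a simple deny-list filter applied before a request reaches the LLM agent. This is a minimal, rule-based illustration only: the patterns and names are hypothetical, and it omits the machine learning classifiers the article mentions. As the paragraph above stresses, attackers can rephrase around such rules, so the filter is one layer, not a complete defense.

```java
import java.util.List;
import java.util.Locale;
import java.util.Optional;
import java.util.regex.Pattern;

/** Hypothetical, minimal pattern-based guardrail; catches only known phrasings. */
final class PromptGuardrail {

    // Illustrative deny-list; a real deployment would maintain and tune such rules
    private static final List<Pattern> SUSPICIOUS_PATTERNS = List.of(
            Pattern.compile("ignore (all )?(previous|prior) instructions"),
            Pattern.compile("reveal (your|the) system prompt"),
            Pattern.compile("you are now (in )?developer mode"));

    private PromptGuardrail() {}

    /** Returns the first matching pattern, or empty if the input passes the filter. */
    static Optional<String> findViolation(String userInput) {
        // Normalize case and collapse whitespace so trivial variations still match
        String normalized = userInput.toLowerCase(Locale.ROOT).replaceAll("\\s+", " ");
        for (Pattern p : SUSPICIOUS_PATTERNS) {
            if (p.matcher(normalized).find()) {
                return Optional.of(p.pattern());
            }
        }
        return Optional.empty();
    }

    /** True if no deny-list pattern matched. */
    static boolean isAllowed(String userInput) {
        return findViolation(userInput).isEmpty();
    }
}
```

Returning the matched pattern rather than a bare boolean makes it easy to log why a request was blocked, which feeds the monitoring discussed next.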

Additional explicit protective measures are therefore required. Fine-grained access controls ensure that company knowledge is stored and used securely: permissions stored in the RAG database, checked consistently against every user request, prevent sensitive information from being disclosed inadvertently. Targeted monitoring and logging also make detecting and responding to attacks more efficient. Logs reveal suspicious user activity or unusual LLM behavior, so that countermeasures can take effect in time.
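Permission checks and logging can be combined at the point where retrieved documents are handed to the LLM. The sketch below assumes a hypothetical schema in which each document carries the set of groups allowed to read it; documents the user may not read are dropped before they can become context, and each denial is logged for later monitoring. The record names and the group model are illustrative assumptions, not the article's design.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;
import java.util.logging.Logger;

/** Hypothetical permission filter between the RAG retriever and the LLM. */
final class AccessControlledRetrieval {

    private static final Logger LOG = Logger.getLogger(AccessControlledRetrieval.class.getName());

    /** A retrieved document plus the groups permitted to read it (assumed schema). */
    record Document(String id, String content, Set<String> allowedGroups) {}

    /** The requesting employee and their group memberships. */
    record User(String name, Set<String> groups) {}

    private AccessControlledRetrieval() {}

    /** Keeps only documents the user may read; logs every denial for monitoring. */
    static List<Document> filterForUser(User user, List<Document> candidates) {
        List<Document> permitted = new ArrayList<>();
        for (Document doc : candidates) {
            // Access is granted if the user shares at least one group with the document
            boolean allowed = doc.allowedGroups().stream().anyMatch(user.groups()::contains);
            if (allowed) {
                permitted.add(doc);
            } else {
                LOG.warning(() -> "Access denied: user=" + user.name() + " document=" + doc.id());
            }
        }
        return permitted;
    }
}
```

Filtering after retrieval keeps the sketch short; an alternative design pushes the permission predicate into the database query itself so that unauthorized documents are never retrieved at all.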

When an employee queries the chatbot, the LLM agent receives the request first. It then calls the RAG retriever, which searches the RAG database for the documents most relevant to the request; this is known as semantic search. The retrieved documents are added to the employee's request as context and passed to the LLM, which uses them to generate a relevant answer.
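The retrieval flow can be sketched in a few lines, assuming the documents and the query have already been converted into embedding vectors by some embedding model (not shown). Here an in-memory list stands in for the RAG database, cosine similarity ranks the chunks, and the top results are prepended to the question. All names and the prompt wording are hypothetical; production vector databases typically use approximate nearest-neighbor indexes instead of sorting every entry.

```java
import java.util.Comparator;
import java.util.List;
import java.util.stream.Collectors;

/** Hypothetical in-memory semantic search standing in for the RAG database. */
final class SemanticRetriever {

    /** A chunk of company knowledge with its precomputed embedding vector. */
    record Chunk(String text, double[] embedding) {}

    private SemanticRetriever() {}

    /** Cosine similarity of two equal-length vectors (0 if either is a zero vector). */
    static double cosine(double[] a, double[] b) {
        if (a.length != b.length) {
            throw new IllegalArgumentException("dimension mismatch");
        }
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        if (normA == 0 || normB == 0) {
            return 0;
        }
        return dot / (Math.sqrt(normA) * Math.sqrt(normB));
    }

    /** Returns the k chunks most similar to the query embedding. */
    static List<Chunk> topK(double[] queryEmbedding, List<Chunk> store, int k) {
        return store.stream()
                .sorted(Comparator.comparingDouble((Chunk c) -> cosine(queryEmbedding, c.embedding())).reversed())
                .limit(k)
                .toList();
    }

    /** Adds the retrieved chunks to the employee's question as context for the LLM. */
    static String buildPrompt(String question, List<Chunk> context) {
        String contextBlock = context.stream()
                .map(Chunk::text)
                .collect(Collectors.joining("\n---\n"));
        return "Answer using only the context below.\n\nContext:\n" + contextBlock
                + "\n\nQuestion: " + question;
    }
}
```

In the chatbot scenario, the result of `topK` would pass through a permission filter before `buildPrompt` is called, so that context only ever contains documents the requesting employee may see.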

Proven principles also raise the level of security when handling user input and generated output. First, strict input validation minimizes the risk of injection into the system, while clear data flows and secure output functions prevent malicious content from being embedded. For example, only permitted file formats and character sets are accepted; unexpected or malicious content is rejected. Second, context-aware output sanitization reduces the risk of attacks on the target system: removing or neutralizing dangerous elements such as script tags protects against cross-site scripting (XSS). Third, continuous logging and monitoring create transparency. Consistent logging makes anomalies visible, and practical examples show how this enables companies to react to suspicious activity at an early stage.
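The first two principles can be sketched as allow-list input checks and HTML output encoding. The permitted extensions, character set, and length limit below are hypothetical placeholders. Note that the encoder escapes dangerous characters rather than removing script tags; this is a swapped-in technique that neutralizes markup without altering the text. It covers HTML body and quoted-attribute contexts only; other contexts (JavaScript, URLs, CSS) need different encoding, for which an established library such as the OWASP Java Encoder is preferable to hand-written code.

```java
import java.util.Locale;
import java.util.Set;
import java.util.regex.Pattern;

/** Hypothetical input validation and output encoding helpers. */
final class InputOutputHandling {

    // Allow-lists are assumptions; adjust to what the application actually needs
    private static final Set<String> ALLOWED_EXTENSIONS = Set.of("pdf", "txt", "docx");
    // Letters, digits, whitespace and common punctuation; '<', '>' and NUL are rejected
    private static final Pattern ALLOWED_TEXT = Pattern.compile("[\\p{L}\\p{N}\\s.,;:!?'\"()\\-/]*");
    private static final int MAX_LENGTH = 4000;

    private InputOutputHandling() {}

    /** Allow-list check on the extension of an uploaded file name. */
    static boolean isAllowedFileName(String fileName) {
        int dot = fileName.lastIndexOf('.');
        if (dot <= 0 || dot == fileName.length() - 1) {
            return false;
        }
        return ALLOWED_EXTENSIONS.contains(fileName.substring(dot + 1).toLowerCase(Locale.ROOT));
    }

    /** Rejects overly long prompts and characters outside the permitted set. */
    static boolean isAllowedText(String input) {
        return input.length() <= MAX_LENGTH && ALLOWED_TEXT.matcher(input).matches();
    }

    /** Encodes LLM output for an HTML context so embedded markup is not executed. */
    static String escapeHtml(String text) {
        StringBuilder out = new StringBuilder(text.length());
        for (int i = 0; i < text.length(); i++) {
            char c = text.charAt(i);
            switch (c) {
                case '<' -> out.append("&lt;");
                case '>' -> out.append("&gt;");
                case '&' -> out.append("&amp;");
                case '"' -> out.append("&quot;");
                case '\'' -> out.append("&#39;");
                default -> out.append(c);
            }
        }
        return out.toString();
    }
}
```

For example, `escapeHtml("<script>alert('x')</script>")` yields `&lt;script&gt;alert(&#39;x&#39;)&lt;/script&gt;`, which a browser displays as text instead of running it.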

Conclusion: Securing LLM operations with principles and methods

In conclusion, organizations that consistently monitor their use of LLMs and regularly review their protection mechanisms reduce risks more effectively. A three-stage approach of filtering, access control and monitoring is decisive for security in business processes. Examples from corporate practice show how security teams build a sustainable security culture by combining clear responsibilities with training. AI-supported applications can thus be operated in a risk-conscious and trustworthy manner, which is the essential foundation for adopting them in the future.

The article appeared in the September issue of JavaSPEKTRUM.